AI discovery in finance: Winning the shortlist in agent-driven investing

In the age of Bloomberg terminals and factor screens, investment discovery used to look reassuringly mechanical: filter, sort, compare, then debate. That workflow is now being quietly re-engineered by large language models and autonomous “copilots” that turn a question into a thesis, a shortlist and a set of recommended next steps.

For asset managers and the commercial teams that sell their products, this is not just another distribution channel to optimise. It is a new gatekeeping layer. The unsettling implication is simple: a strategy can be sound, competitively priced and well-performing, and still fail to appear when the machine drafts the shortlist.

The shift matters because the output of these systems is not a spreadsheet. It is narrative. When an internal agent is asked to find “resilient beneficiaries of a theme”, it will often return a coherent story, a handful of names and a justification trail. In mature deployments, that trail must be auditable, defensible and compliant.

In other words, the contest is moving upstream from performance alone to legibility: whether an AI system, drawing on sources it considers trustworthy, can reliably retrieve, cite and reconcile what you claim to do, how you do it and what risks you take.

From performance to presence

Asset managers have spent decades refining KPIs around flows, performance, tracking error, Sharpe ratios and client retention. Those metrics are not going away. But they are being joined by a different set of questions, closer to media measurement than portfolio analytics: are you being surfaced, how often, and in what role?

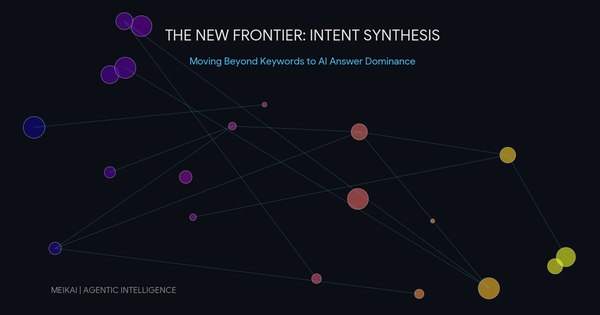

A practical framework is emerging inside institutions experimenting with agent-led research:

- Visibility: how frequently a firm or product is mentioned for relevant prompts (the AI equivalent of share of voice).

- Selection: when the system provides “top options”, how often the product is included.

- Role: whether the strategy becomes the anchor of the answer or a footnote.

- Narrative accuracy: whether the model describes risk, liquidity, process and governance as intended, or drifts into inference and misunderstanding.

- Source impact: which documents actually influence the outcome, such as regulatory filings, audited reports, methodology notes, rating-agency language or reputable research.

For distribution and product leaders, these are attractive because they are actionable. They turn a fuzzy complaint (“we’re not being recommended”) into a diagnostic map: where the model is looking, what it is reading, and where the story breaks.
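
The first three measures lend themselves to simple counting once agent answers are recorded. What follows is a minimal sketch, not a standard methodology: the `AgentAnswer` schema, the fund names and the choice to treat "ranked first in the shortlist" as a stand-in for the anchor role are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentAnswer:
    """One recorded agent answer to a test prompt (hypothetical schema)."""
    prompt: str
    mentioned: list[str]  # every product named anywhere in the answer
    shortlist: list[str]  # products in the "top options", in ranked order


def presence_metrics(answers: list[AgentAnswer], product: str) -> dict[str, float]:
    """Share of answers in which the product is mentioned, shortlisted, or ranked first."""
    total = len(answers)
    if total == 0:
        return {"visibility": 0.0, "selection": 0.0, "anchor": 0.0}
    visible = sum(product in a.mentioned for a in answers)
    selected = sum(product in a.shortlist for a in answers)
    # Assumption: "anchor" is approximated as holding the first shortlist slot.
    anchored = sum(bool(a.shortlist) and a.shortlist[0] == product for a in answers)
    return {
        "visibility": visible / total,
        "selection": selected / total,
        "anchor": anchored / total,
    }


# Illustrative data only; the funds and answers are invented.
answers = [
    AgentAnswer("UCITS daily liquidity credit", ["Fund A", "Fund B"], ["Fund B", "Fund A"]),
    AgentAnswer("AI infrastructure beneficiaries", ["Fund B", "Fund C"], ["Fund B"]),
    AgentAnswer("rate cuts with sticky inflation", ["Fund A"], ["Fund A"]),
    AgentAnswer("low drawdown income", ["Fund C"], ["Fund C"]),
]
print(presence_metrics(answers, "Fund A"))
# {'visibility': 0.5, 'selection': 0.5, 'anchor': 0.25}
```

Narrative accuracy and source impact are harder to reduce to a count, since they depend on reading what the answer says and which citations it leans on.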

Why the machines default to competitors

When two offerings are economically similar, as they often are in crowded categories, the agent does not “choose” in the human sense. It defaults. The winner is frequently the organisation that has made itself easier to parse.

That tends to mean: disclosures that are structured and unambiguous; consistent language across fact sheets, filings and marketing; and a dense footprint across sources the model is permitted to trust. The loser is the firm whose materials are fragmented, contradictory, heavily stylised, or buried behind unstable web pages and opaque PDFs.

This can feel unfair to portfolio managers who believe nuance is the craft. But machines do not reward nuance they cannot retrieve. If a product’s liquidity terms are described differently in separate documents, or risk is framed in marketing language that does not map cleanly to formal disclosures, the system will often hedge or exclude.

The practical result is what some executives describe, only half-jokingly, as “competitor-first defaults”: the AI repeatedly surfaces the same familiar names because they are the easiest to justify with citations.

The rise of “semantic alpha”

Finance is not short of buzzwords, and “semantic alpha” risks joining the pile. Strip the slogan away and it points to a concrete advantage: being the strategy whose thesis can be consistently retrieved and defended inside an agent’s workflow.

That advantage shows up in mundane but valuable ways. Firms that are consistently represented in the model’s trusted corpus see fewer mischaracterisations, faster internal enablement for advisors, less back-and-forth with RFP teams, and, ultimately, a smoother path from interest to allocation.

It is not about being more charismatic in marketing copy. It is about building an evidence trail that survives automated scrutiny.

A Monday-morning playbook

So what should a firm do, practically, if it believes agent-driven discovery will become a standard part of institutional workflow?

Build a trusted source backbone.

Start with the documents an agent can cite without creating compliance headaches: regulatory filings, audited reports, clearly structured fact sheets, methodology documents for rules-based strategies, and governance statements. Make them stable, internally consistent and easy to parse. Use predictable headings and definitions. Avoid moving URLs. Eliminate contradictions. Machines are unforgiving about document drift.
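
Document drift of this kind can be caught mechanically once key terms have been extracted from each source. The sketch below assumes that extraction has already happened and that each document is reduced to a small dictionary of fields. The document names, field names and values are hypothetical.

```python
from collections import defaultdict


def find_contradictions(
    documents: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Return fields whose normalised value differs between documents.

    `documents` maps a document name to the field values extracted from it.
    The result maps each conflicting field to {value: [documents stating it]}.
    """
    seen: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for doc_name, fields in documents.items():
        for field_name, value in fields.items():
            # Normalise case and whitespace so cosmetic differences are ignored.
            normalised = " ".join(value.lower().split())
            seen[field_name][normalised].append(doc_name)
    # Keep only fields for which more than one distinct value was found.
    return {name: dict(values) for name, values in seen.items() if len(values) > 1}


# Illustrative inputs only.
docs = {
    "fact_sheet": {"liquidity": "Daily", "risk_indicator": "3"},
    "prospectus": {"liquidity": "daily", "risk_indicator": "3"},
    "marketing_page": {"liquidity": "Weekly", "risk_indicator": "3"},
}
print(find_contradictions(docs))
# {'liquidity': {'daily': ['fact_sheet', 'prospectus'], 'weekly': ['marketing_page']}}
```

Exact string comparison is a deliberately crude test. It will miss contradictions phrased in different words, so it is better treated as a first filter than as proof of consistency.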

Map prompts to products.

Firms experimenting with copilots are building prompt libraries that mirror real discovery behaviour: macro scenarios (“rate cuts with sticky inflation”), themes (“AI infrastructure beneficiaries”), and constraints (“UCITS compliant, daily liquidity, drawdown limit”). The point is not to game the system with keywords; it is to define, for each cluster of questions, what it would mean to be a legitimate shortlist candidate — and to ensure the documentation supports that claim.
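
One way to picture such a library is as a set of prompt clusters, each paired with the attributes a credible candidate would need to document. The structure below is a hypothetical sketch; the class names, attribute keys and example fund are assumptions, not a description of any firm's system.

```python
from dataclasses import dataclass, field


@dataclass
class PromptCluster:
    """A group of related discovery prompts and what a candidate must evidence."""
    name: str
    example_prompts: list[str]
    required_attributes: dict[str, str]  # attribute -> value a candidate must document


@dataclass
class Product:
    name: str
    documented_attributes: dict[str, str] = field(default_factory=dict)


def missing_evidence(cluster: PromptCluster, product: Product) -> list[str]:
    """List required attributes that the product's documentation does not support."""
    return [
        attr
        for attr, required in cluster.required_attributes.items()
        if product.documented_attributes.get(attr) != required
    ]


# Illustrative data only.
cluster = PromptCluster(
    name="constrained_income",
    example_prompts=["UCITS compliant, daily liquidity, drawdown limit"],
    required_attributes={"wrapper": "UCITS", "liquidity": "daily"},
)
product = Product("Fund A", {"wrapper": "UCITS", "liquidity": "weekly"})
print(missing_evidence(cluster, product))
# ['liquidity']
```

An empty list means the documentation supports the claim for that cluster. A non-empty one points either to a product that does not belong on that shortlist or to a gap in what has been published.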

Measure visibility and fix perception gaps.

Once an organisation runs systematic tests, it will discover surprises: a product described as conservative appears as “high risk”; an active strategy is summarised as quasi-passive; liquidity is misunderstood; or a governance approach is simply absent. These are not philosophical problems. They are repair jobs: clarify risk framing, standardise language, add missing proof points, retire stale documents, and align the public narrative with the regulated one.

Govern the system like a regulated process, because it is.

If agents are being used for client-facing work, governance cannot be optional. That usually implies citation requirements, logging of sources and reasoning, allowlists of approved documents, and human approval gates for deliverables. The goal is scale without compliance debt: faster drafting and discovery, but with accountability preserved.
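
Three of those controls (citation requirements, an allowlist and a human approval gate) can be expressed as a simple pre-release check. This is a sketch under stated assumptions: the `Draft` fields, the source identifiers and the reviewer field are invented for illustration, and logging of sources and reasoning is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """An agent-drafted deliverable awaiting release (hypothetical schema)."""
    text: str
    citations: list[str]               # identifiers of the sources the draft relies on
    approved_by: Optional[str] = None  # name of the human reviewer, if any


def release_blockers(draft: Draft, allowlist: set[str]) -> list[str]:
    """Return the reasons a draft may not be released; an empty list means it may."""
    problems: list[str] = []
    if not draft.citations:
        problems.append("no citations")
    for source in draft.citations:
        if source not in allowlist:
            problems.append(f"source not on allowlist: {source}")
    if draft.approved_by is None:
        problems.append("missing human approval")
    return problems


# Illustrative data only.
ALLOWLIST = {"prospectus_2024", "annual_report_2023", "fact_sheet_q2"}
draft = Draft(
    text="Fund A offers daily liquidity...",
    citations=["prospectus_2024", "blog_post_2021"],
)
print(release_blockers(draft, ALLOWLIST))
# ['source not on allowlist: blog_post_2021', 'missing human approval']
```

Returning every blocker, rather than stopping at the first, gives the reviewer a complete list to work through before sign-off.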

Not robo-trading but “always-on advisory”

The end point is not a machine replacing human judgement in capital allocation. The more realistic trajectory is a layer of “always-on” advisory support: systems that continuously monitor, frame scenarios, draft memos, check constraints, and keep a log of why a recommendation was made, with humans retaining responsibility.

In that world, the competitive frontier is less about who has the slickest deck and more about who has built the cleanest semantic infrastructure: the governed set of documents, definitions and proof that determines whether an AI system can select, cite and operationalise a strategy.

For decades, finance competed on talent and models. It will keep doing so. But as agents become a normal interface to research and due diligence, another contest is beginning, one that is quieter, more procedural and potentially decisive: the fight to be legible to the machines that increasingly shape the shortlist.